No Calculators, Please

If AI can score 97% on a full CS degree, was the output ever really what we were trying to teach?

I. The Benchmark

Almost everyone who went through the American education system has heard some version of the phrase. It was printed at the top of tests, announced at the start of exams, repeated by teachers who genuinely believed they were protecting something important. No calculators, please. The reasoning was always the same: if you let students use a calculator, they'll never learn to do the math themselves. The calculator becomes a crutch. Their foundation crumbles.

This argument has resurfaced, in almost identical form, every time a powerful new tool has entered education. Pocket calculators in the 1970s. Graphing calculators in the 1990s. The internet, Google, Wikipedia, smartphones. Each time, institutions drew a line. Oftentimes, they missed the mark and had to redraw it later. But the conversation always had the same shape, the same fears, and the same eventual resolution: the tool isn't going away, so we need to figure out what's still worth teaching by hand.

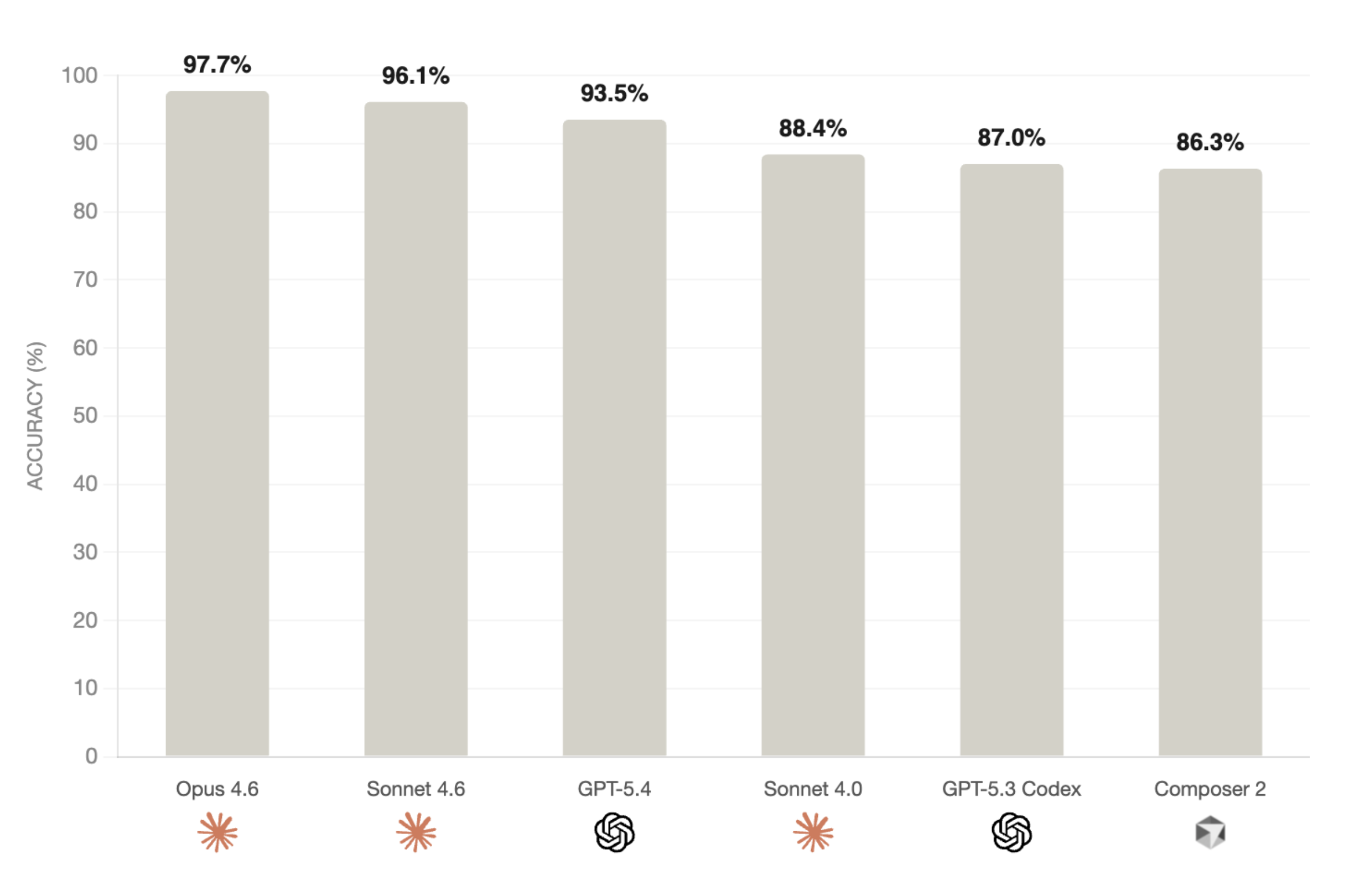

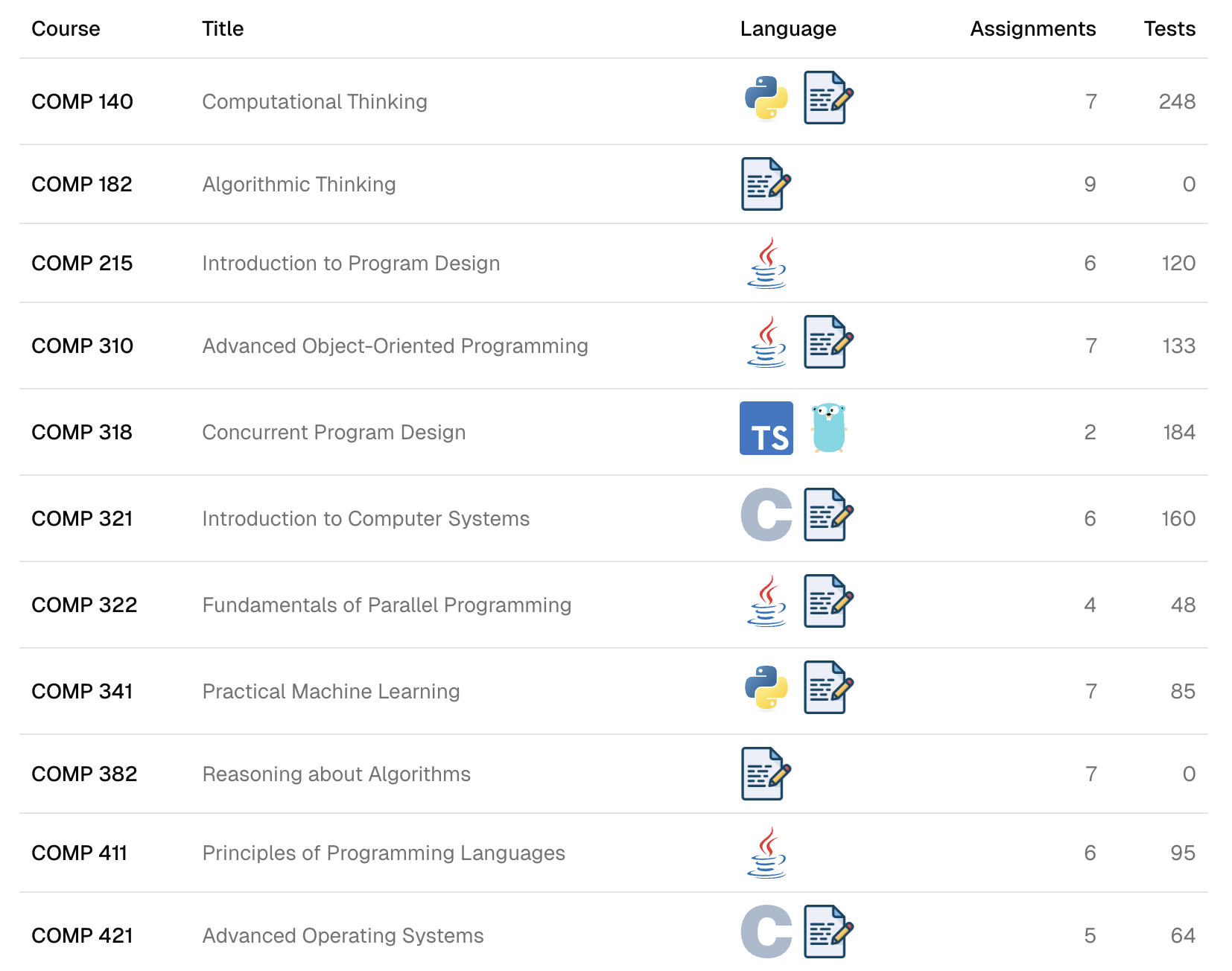

I graduated from Rice University in 2024 with a B.S. in Computer Science. Recently, I collected all the assignments from the eleven courses that make up the CS degree, all of which I took myself except COMP 318. I packaged them into an automated benchmark, gave various AI agents the same instructions the students receive, and let them work through the entire degree. I call it BSCS-bench, and the results are publicly available. The top models scored 97% on the automated test cases (roughly a 3.92 GPA when adjusted to the course grading scales and including AI-graded work), in under thirteen hours, for less than $140 in compute costs.

This raises a question: if the tool can produce the output, was the output ever really what we were trying to teach? Or were we trying to teach something deeper and using the output as a proxy because we didn't know how to measure the deeper thing directly? We need to talk seriously about what that deeper thing is, whether our current system actually teaches it, and what changes when the proxy becomes trivially easy to generate.

This essay is my attempt to work through that. Education has always been about teaching people how to think. For a long time, we've been using assignments and tests as proxies to measure this because we didn't have a better way. Now, AI has broken those proxies, and we need to find a new way to measure understanding.[1] First, I'll analyze the history of a remarkably similar debate we've already lived through. Then, I'll look towards what comes next.

II. We've Been Here Before

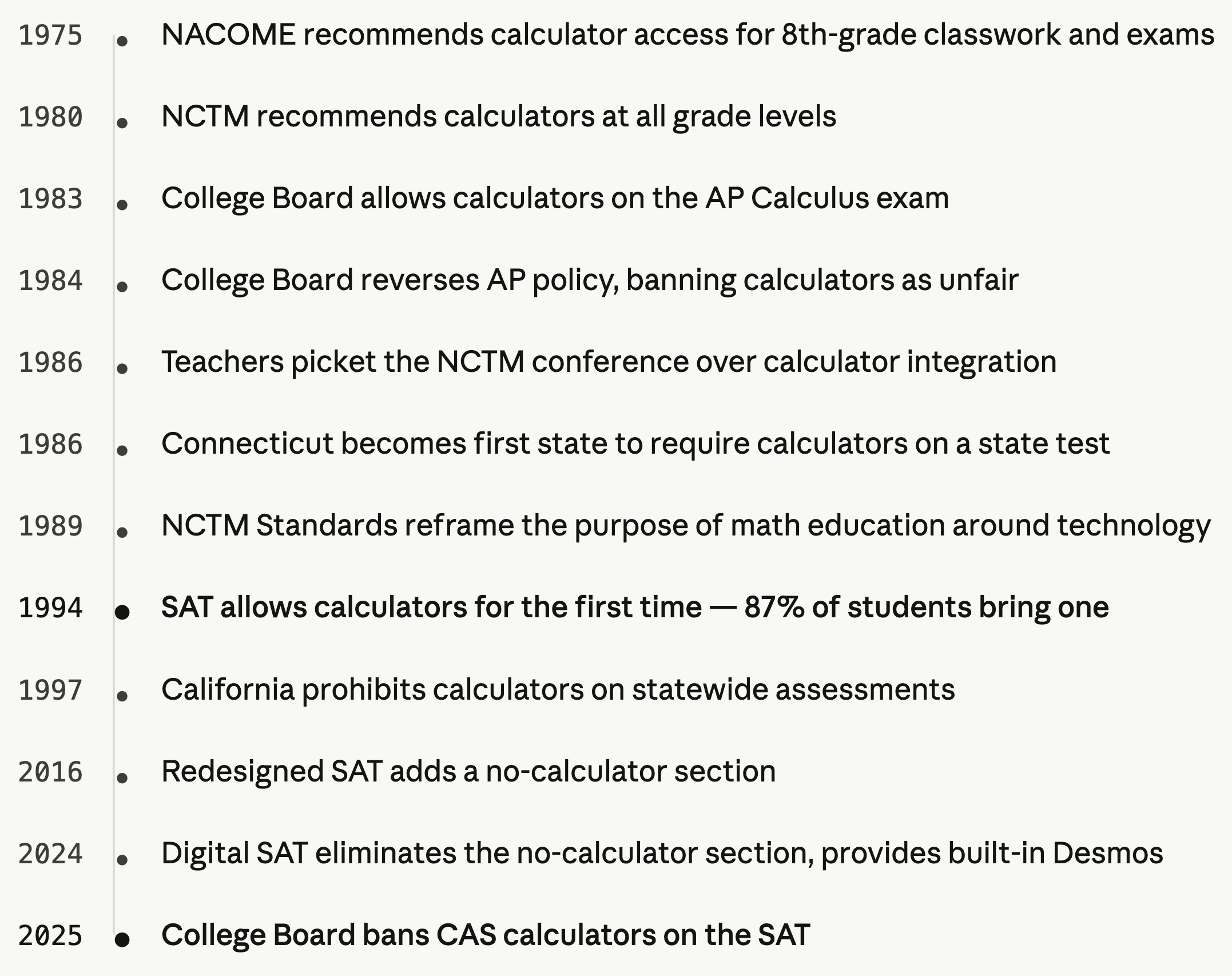

The calculator debate in American education parallels today's AI discourse very closely. By 1975, there was roughly one calculator for every nine Americans, and the National Advisory Committee on Mathematical Education was already recommending that students in eighth grade and above should have access to them for all classwork and exams.[2] Five years later, the National Council of Teachers of Mathematics went further, recommending that math programs at every level should "take full advantage of calculators."[3] But adoption was slow and deeply contentious. By the mid-1980s, fewer than half of math teachers were using calculators in their classrooms, and even those who did often limited them to checking answers.[4]

The arguments against calculators will sound familiar to anyone following today's AI discourse. Teachers worried that students would become dependent on the devices and lose the ability to think through problems on their own. Parents worried about a generation that couldn't do basic arithmetic. A former NCTM president expressed concern that students would "forget how to think on their own or solve problems."[5] In 1986, teachers actually picketed the NCTM's annual conference over a position statement urging calculator integration at all grade levels.[6] And of course, there was the classic line that an entire generation of students heard from their teachers: "You're not always going to have a calculator with you." It was a reasonable-sounding argument in 1985. Today, every person reading this has a calculator in their pocket that is also a camera, a library, and a connection to the sum of human knowledge.

The fear was straightforward: if you give students a tool that does the hard part for them, they'll never learn to do the hard part themselves. In my opinion, this wasn't entirely unreasonable. When calculators were first becoming ubiquitous and affordable, you couldn't assume you'd have one on hand every time you needed to do math. But the conversation about whether calculators were good or bad often obscured the more important question: good or bad for what? If the goal of math education is to produce students who can perform long division quickly and accurately, then calculators are obviously a threat. But if the goal is to produce students who understand mathematical reasoning and can apply it to real problems, calculators are an asset. They free up time and cognitive effort for more important work.

The NCTM's 1989 Curriculum and Evaluation Standards tried to resolve this by reframing the entire purpose of math education. The Standards declared that technology had "changed the very nature of mathematics" and recommended that appropriate calculators should be available to all students at all times.[7] The NCTM envisioned a classroom that looked more like a laboratory, where students would discover and test conjectures rather than drilling procedures by hand.[8]

The real tipping point came in 1994, when the College Board allowed calculators on the SAT for the first time. In the first year, 87% of students brought one. By 1997, 95% did.[9] The impact was immediate. The College Board's own research showed that graphing calculator users outperformed scientific calculator users.[10] A professor at the University of California argued in the New York Times that calculators gave wealthy students an unfair advantage, because affluent families could afford advanced models while students without regular access would waste test time just figuring out the device.[11]

The SAT's relationship with calculators continued to evolve. In 2016, the College Board added a no-calculator section, arguing that knowing when not to use the tool was itself an important skill.[12] In 2024, the digital SAT reversed course, eliminating the no-calculator section and giving every student a free built-in Desmos graphing calculator.[13] Then, in August 2025, they banned all CAS calculators, devices that can manipulate algebraic expressions symbolically and produce exact solutions rather than decimal approximations. The College Board determined that CAS functionality "can affect accurate assessment of the math abilities that the SAT Suite is designed to test."[14]

However, the College Board still allows CAS calculators on AP exams.[15] This is because AP exams are designed to measure understanding of problems that CAS calculators can't solve end-to-end. The exam tests the student's ability to understand the problem, translate it into a mathematical representation themselves, and only then use their algebra skills to find the solution. The SAT measures whether a student can do algebra by hand. AP exams assume that skill as a prerequisite, so it is logical to allow CAS calculators as a tool.

While the calculator debate has gone on for decades now, I think the College Board has learned its lesson: focus first on what you are trying to test, then choose the allowed tools accordingly. For a fifth-grade times-table test, don't allow a calculator. For an algebra class, allow any calculator that can't solve algebraic equations end-to-end. For more advanced courses where algebra is a prerequisite, students should use CAS tools to abstract away the algebraic manipulation and focus on the problem at hand.

AI will force us to move the line again, and much further this time. In computer science, AI doesn't just speed up coding the way a calculator speeds up arithmetic. It can do the whole assignment, start to finish. And you can't ban it in practice, because every student has access to it on the same device they use to do their homework. Given how much more powerful AI is than previous tools, I think it's important to reconsider what skills we're attempting to measure and whether the assignments we use to measure these skills will remain good proxies for understanding.

III. What’s Worth Learning Anymore?

We must ask which parts of the pedagogical process are essential to learning the underlying skill and which parts are mechanical overhead that the tool can handle. The answer is that this boundary has always been fuzzy, always been contested, and always moved over time as tools improved.

I think most people can identify points where they feel confident. I'm quite confident we should still teach mental addition, subtraction, and multiplication tables. There's something foundational about number sense that you can only develop by working with numbers directly. I'm equally confident we don't need to teach anyone how to compute the square root of 7,291 to four decimal places using the Babylonian method.
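For readers who have never seen it, the Babylonian method mentioned above fits in a few lines, which is part of why computing it by hand is so clearly on the machine's side of the line. The sketch below is illustrative only: the function name `babylonian_sqrt`, the starting guess, and the relative tolerance are assumptions made for this example.

```python
def babylonian_sqrt(n: float, rel_tolerance: float = 1e-10) -> float:
    """Approximate sqrt(n) by repeatedly averaging a guess with n / guess."""
    if n < 0:
        raise ValueError("n must be non-negative")
    if n == 0:
        return 0.0

    # Any positive starting guess works; n / 2 is a common simple choice.
    guess = n / 2 if n > 1 else 1.0

    # Stop once guess squared is within a small relative distance of n.
    while abs(guess * guess - n) > rel_tolerance * n:
        guess = (guess + n / guess) / 2

    return guess


if __name__ == "__main__":
    # The example from the text, printed to four decimal places.
    print(f"{babylonian_sqrt(7291):.4f}")
```

Each pass through the loop is one round of the divide-and-average step a student would otherwise carry out on paper.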

The most difficult part is the middle. Should students learn long division? I can see good arguments on both sides. The College Board went back and forth on essentially this question, allowing calculators in 1994, adding a no-calculator section in 2016, then removing it in 2024. Each decision was a judgment call about where the line fell given the tools available. None were permanent.

A lot of universities' current answer to AI is some version of restoration. Ban the tool. Tighten surveillance. Push work back into the classroom. I understand the instinct, but I don't think it solves the problem.

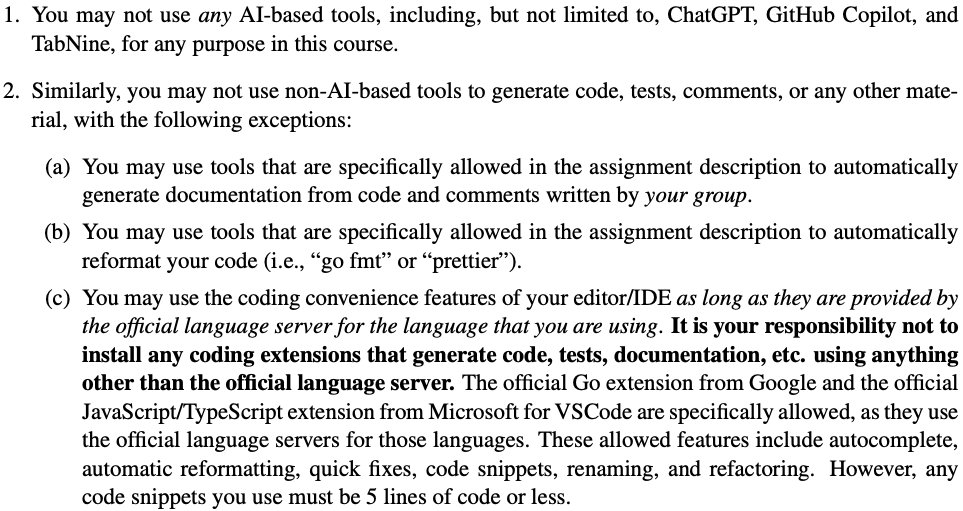

One of the clearest examples I know is COMP 318 at Rice, one of the hardest courses in BSCS-bench and one of the classes with a blanket AI ban. Students have reported they need to turn off Copilot's tab autocomplete before going to office hours so a TA doesn't report them. We're not even talking about agentic coding with frontier models. We're talking about the most basic forms of machine assistance, the kind of thing that starts to blur almost immediately into tools we've already normalized.

This is a snippet from the COMP 318 syllabus outlining the rules. The last line is the most telling: an arbitrary cap of five lines on auto-generated code snippets. Really? If Copilot tab completion is forbidden because it helps too much, why isn't the language server? Will six lines of auto-generated code get you referred to the Honor Council? Why is syntax highlighting fine? At some point the line stops looking principled and starts looking like "I dislike the new tool but not the old ones I'm used to."

I’m not trying to dunk on one course. I bring it up because it reveals what happens when institutions try to preserve legacy assessments in a world where the tool boundary is moving underneath them. They are forced to make finer and finer distinctions between acceptable and unacceptable assistance, and those distinctions often rest more on familiarity than on a coherent theory of what thinking actually is. That's not a stable place to build policy.

In computer science, I think the same framework applies, but I find myself less certain about what the foundations are. My initial instinct was that we still need to teach what a variable is, what a loop does, what recursion is, and how to reason about algorithms using basic discrete math and logic. Not because these technical concepts are intrinsically important, but because they're a good vehicle for teaching first-principles thinking and logical reasoning. Those skills will matter far more than technical skills in the near future, and CS education has historically been relatively good at teaching them.

But as I write this, I'm starting to doubt that traditional CS education is really the best vehicle for that. How hard is it to understand the concept of a loop? I doubt students will really need to know the difference between a for and a while loop. However, I'm extremely confident they'll need to know how to communicate the difference between "do this thing x times" and "do this thing until this other thing happens" to an AI model. That distinction matters, and it requires clear thinking. But it's not obvious that you need a semester of Java to get there. Do we need to continue teaching our formalized, programming-oriented versions of these concepts, or is teaching students how to clearly communicate their ideas enough? I genuinely don't know. I will say that my background in CS makes me a much better AI-assisted builder than I would be without it, even though I was never an amazing traditional programmer. The mental models matter. But I also wonder whether the inevitable Claude Opus 5.5, GPT-6.2-Codex, or Gemini 4.1 Preview models will need me to have those mental models at all, or whether they'll meet me wherever I am.
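The loop distinction described above can be made concrete with a small sketch. The two functions below are hypothetical examples written for this essay, not drawn from any course assignment: one repeats an action a known number of times, the other repeats until a condition becomes true.

```python
def repeat_fixed(times: int) -> int:
    """'Do this thing x times': the count is known before the loop starts."""
    actions_done = 0
    for _ in range(times):
        actions_done += 1  # stand-in for "this thing"
    return actions_done


def doublings_until(start: int, target: int) -> int:
    """'Do this thing until this other thing happens': stop on a condition."""
    if start <= 0:
        raise ValueError("start must be positive, or doubling never reaches target")
    value = start
    steps = 0
    while value < target:
        value *= 2  # stand-in for "this thing"
        steps += 1
    return steps


if __name__ == "__main__":
    print(repeat_fixed(3))
    print(doublings_until(1, 100))
```

In the first function, the number of iterations is an input. In the second, it is an output that depends on when the stopping condition is met. That is the difference a student would need to communicate clearly, whatever syntax ends up expressing it.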

Even with that uncertainty about the foundations, the stuff that's clearly past the line is much easier to identify. Low-level memory management in C. Manual implementation of data structures from scratch. Pointer arithmetic and system call interfaces. Much of this was already being automated by high-level languages like Python and JavaScript before AI entered the picture. These languages became dominant precisely because they moved the boundary between human work and machine work. AI is doing the same thing at a much higher level of abstraction.

Whatever the right balance is today, it will be different soon, because the pace of change is unlike anything we've seen in previous tool transitions. The calculator debate played out over decades. A TI-84 in 2005 was not dramatically more capable than graphing calculators from 1995. Institutions had time to observe, debate, adjust, and observe again.

AI is not following that pattern. The capabilities are improving on a timeline measured in months. Three years ago, the best language models struggled with elementary arithmetic. Today they score 97% on a full university CS curriculum. These models couldn't do assignments like this when I was a student just two years ago (and believe me, I tried). Previous tool transitions always left a clear residual layer of human skill that the tool couldn't touch. Calculators couldn't do algebra. CAS couldn't do proof-writing. High-level languages couldn't do system design. With AI, it's not clear where that ceiling is, or whether there is one. And the pace is still accelerating: OpenAI described GPT-5.3-Codex as "the first model that was instrumental in creating itself," with early versions used to debug their own training and manage their own deployment.[16] When the tool starts improving the tool, linear extrapolation stops being a useful way to plan.

We don't have thirty years to figure this out. We might not have five. This question is now staring down every CS department in the country, and most of them haven't even started.

IV. What Instead of How

There’s a distinction I keep coming back to: the difference between knowing how to do something and knowing what to do. For most of the history of CS education, these were bundled together. You couldn't demonstrate that you knew what to build without actually building it. If you could produce working code, that was strong evidence you understood the problem, because there was no shortcut.

AI has unbundled these. You can now produce working, correct, efficient code by describing the problem clearly and evaluating the output. This is what I did when I built BSCS-bench. The project involved designing a benchmark harness, orchestrating AI agents across courses, parsing heterogeneous assignment formats, running test suites, and collecting and scoring results. This would have been a multi-person research effort a few years ago. I built it solo, on nights and weekends, and I never opened an IDE. I never wrote a single line of code by hand. I'm not sure I ever will again.

Here's what I find most exciting about this: these skills are not vocational. Good AI prompting closely resembles clear reasoning, careful judgment, the ability to navigate ambiguity, and knowing when to trust an authority and when to question it. As I demonstrate with BSCS-bench, AI models are incredibly good at implementing solutions to clearly defined problems. I’d argue that defining problems well has always been the real core of engineering, and if we can use tools to abstract away implementation and focus more on asking good questions, that’s a positive outcome.

This means the right response is to redesign assignments around using AI well. Open-ended projects do this much better than repetitive assignments like those found in BSCS-bench. I experienced this in a game development class I took while studying abroad in fall 2023. The instructor encouraged us to use as many AI tools as we wanted. That was the first time I really tried letting AI models write all my code, and I was hooked almost immediately.

The point of the class wasn't to prove that you could manually write every line yourself. The point was to make the best game you could make. In that environment, AI tools were an obvious net positive, so the instructor encouraged students to use them. Students could debug faster, iterate more, and build significantly more ambitious games than they could have without the tools. That class felt much closer to the future of education than the classes trying to hold the line against autocomplete.[17]

I don't know what the full solution looks like, and I'm skeptical of anyone who claims to. The modern CS educational system took decades to build and its problems are deeply structural. But I do think there's a concrete first step that any CS department could take tomorrow: every CS curriculum should have at least one course focused entirely on building with AI tools. Universities should create a space where students are evaluated on defining problems, making tradeoffs, judging output, and pushing AI tools to the limit.

That is only a starting point. If my diagnosis is even mostly right, one class is not the solution. The larger lesson is that institutions need to build the capacity to adapt continuously. The calculator debate took thirty years to settle. AI use in school won't wait that long.

The institutions that survive will be the ones that stop treating their curriculum as fixed and start treating it as something that needs to evolve as fast as the tools their students are using. That includes the evaluation model itself. Assignments, test suites, and GPAs were designed for a world where producing the artifact was the hard part and could serve as a reliable proxy for understanding. That world is ending, and the proxy is broken.

BSCS-bench is my attempt to make this gap legible. The instructions, agent responses, and results are public. Anyone can look at the data and decide for themselves what it means. I expect some people at Rice to be upset that I'm releasing this publicly, and I understand that. But I don't think anyone can look at a model scoring 97% across a full CS curriculum and conclude that nothing needs to change.

The teachers who picketed the NCTM conference in 1986 had good intentions. They were genuinely worried about their students, and some of their concerns turned out to be valid. But calculators didn't go away. The same will be true here, except the timeline is compressed and the stakes are higher.

How much further could we push the world if we embraced this instead of running from it? A student with ambition, a clear vision, and access to AI tools can now build things that were previously out of reach for anyone without a team and a budget. I hope we begin to see AI in the same way we see the calculator: a tool that abstracts away the basics and allows us to focus on what really matters.

Thank you to Aidan Gerber, Noah Spector, and Jake Lazzari for feedback on draft versions of this essay.

BSCS Bench
